Tools of the Technocracy: #2 Big Data & Artificial Intelligence

What is all this data for anyways?

By Gabriel

Published: Feb 16 2022

Tools of the Technocracy Series

Technocracy

Big Data

AI

Machine Learning

Surveillance

Digital ID


Big data

Big data is an arms race for information and computing power. The more information and computing power an entity has, the more power it can leverage against you or over others. Privacy is generally discussed in terms of protecting yourself individually, but it is increasingly vital to protect your information in order to protect everyone. By reducing how much you feed into big data, you prevent that data from being weaponized against others. So how is all this data collected?

Web & App analytics

Online services are very interested in where you spend your time and attention. There are a multitude of different ways trackers infect your online experience.

Browsers currently give you much more fine-tuned control over what information you share with the sites you visit, and there are mechanisms to mitigate tracking. With a smartphone app, however, there are far fewer ways to restrict surveillance, and they generally require more effort.

Fingerprinting

Browser fingerprinting is a set of techniques that analyze how your browser behaves. Many technical details about your browser (such as your monitor size, language, & time zone) make you stand out from other users. When combined with other information, such as your IP address, it can be very easy to track you as a unique user. This does not mean that you should continue to leak data. The best defense is getting more people to take their privacy and security seriously. An alternative would be to find ways to send out fake data and interactions to add noise to the dataset.
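To make this concrete, here is a minimal sketch in Python of how a tracker could combine a few harmless-looking details into one identifier. The attribute names, the visitor values, and the share of users with each value are all made up for illustration, and the arithmetic assumes the attributes are independent of each other, which is rarely exactly true.

```python
import hashlib
import math


def fingerprint(attributes: dict[str, str]) -> str:
    """Hash a set of browser attributes into one stable identifier."""
    # Sort the keys so the same attributes always produce the same hash.
    canonical = "|".join(f"{key}={attributes[key]}" for key in sorted(attributes))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def bits_of_entropy(share_of_users: float) -> float:
    """Identifying bits revealed by a value that this share of users has."""
    return -math.log2(share_of_users)


# Hypothetical visitor: every value here is invented for the example.
visitor = {
    "screen": "2560x1440",
    "language": "en-CA",
    "timezone": "America/Toronto",
}

# Hypothetical share of users with each value (not measured data).
shares = {"screen": 1 / 16, "language": 1 / 64, "timezone": 1 / 32}

# Assuming independence, the bits from each attribute simply add up.
total_bits = sum(bits_of_entropy(share) for share in shares.values())

print(fingerprint(visitor))
print(f"{total_bits:.0f} bits, about 1 in {2 ** total_bits:,.0f} users")
# second line prints: 15 bits, about 1 in 32,768 users
```

Three attributes that each look common on their own already narrow this hypothetical visitor down to roughly one in thirty thousand. Every extra detail, such as an IP address, narrows it further.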

Gemini is a new protocol intended to mitigate the amount of tracking sites can do. It is a growing ecosystem and there already is a great deal of great content there. Websites on Gemini are called capsules. You do need a gemini-compatible browser to view them.
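Part of why Gemini limits tracking is how little a request contains. As a rough sketch based on my reading of the Gemini specification, a client opens a TLS connection (port 1965 by default), sends the URL followed by a line break, and reads back a one-line header before the content. The helper names below are my own, and the network connection itself is left out.

```python
GEMINI_PORT = 1965  # default port according to the Gemini specification


def build_request(url: str) -> bytes:
    """A Gemini request is only the absolute URL followed by CRLF."""
    # There are no cookies, referrer, or user-agent fields to send.
    return (url + "\r\n").encode("utf-8")


def parse_response_header(header_line: bytes) -> tuple[int, str]:
    """Split a '<STATUS> <META>' header line into its two parts."""
    text = header_line.decode("utf-8").rstrip("\r\n")
    status, _, meta = text.partition(" ")
    return int(status), meta


request = build_request("gemini://example.org/")
status, meta = parse_response_header(b"20 text/gemini\r\n")
print(request, status, meta)
```

Compare that single line with a typical web request, which carries many headers that help fingerprint you.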

“Data driven” services

Cloud platforms and online services aim to be ‘data driven’. This means that details from every interaction are recorded so that they can ‘improve their services’. Unfortunately for their employees, clients, and partners, this data is very often collected and processed by a third party. Other times it is simply sold outright.

Many organizations use Microsoft, Google or other cloud providers to run their day-to-day affairs. This has tragic implications for the people who rely on those institutions. Your ‘private’ information could be collected by big tech firms without you ever directly using their services.

Dragnet surveillance

Simply not being a target is not enough to avoid problems. Instead of relying on tracking you as an individual, many techniques involve spying on everyone. By being able to analyze every interaction, everyone can be manipulated all the time. With more and more sophisticated tools, this becomes a larger and larger threat. The state has the power to force people into using specific services. As a matter of principle, the powers of the state and large corporations to collect information on people must be restricted.

Biometrics

Biometrics are special pieces of data that should be guarded the most, such as:

- Your DNA

- Your face

- Your eyes

- Your fingerprints

- Your heartbeat

- Your gait

Essentially, you do not want your data to be traceable back to anything that uniquely identifies you. The problem with biometrics is that, unlike passwords or keys, they cannot be (easily) changed or revoked. It is much easier to change a password than to get an entirely new face.

Limitations of “De-identified” data

De-identifying data is the process of removing or changing information like names and addresses in the hope that the dataset won’t allow you to uniquely identify a person. This protection isn’t absolute. For example, location data is essentially impossible to ‘de-identify’. How many people visit your home during certain hours each day? Even if the system doesn’t list their names, it’s probably not too hard to find out.
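Here is a small sketch of why location data resists de-identification. The pings, the device ID, and the choice of night hours are all hypothetical. The only idea is that wherever a device sits most nights is probably where its owner lives.

```python
from collections import Counter

# Hypothetical "de-identified" pings: (device_id, hour_of_day, lat, lon).
# There are no names anywhere in this data.
pings = [
    ("device-7f3a", 2, 45.5017, -73.5673),
    ("device-7f3a", 3, 45.5018, -73.5671),
    ("device-7f3a", 13, 45.5088, -73.5540),
    ("device-7f3a", 23, 45.5016, -73.5674),
    ("device-7f3a", 14, 45.5089, -73.5542),
]

# Assumed hours when most people are at home.
NIGHT_HOURS = {22, 23, 0, 1, 2, 3, 4, 5}


def likely_home(pings, device_id):
    """Guess a home location as the most common night-time grid cell."""
    cells = Counter(
        (round(lat, 3), round(lon, 3))  # three decimals is roughly a city block
        for dev, hour, lat, lon in pings
        if dev == device_id and hour in NIGHT_HOURS
    )
    return cells.most_common(1)[0][0] if cells else None


print(likely_home(pings, "device-7f3a"))
```

Once an address falls out of the data, public records or a simple visit can put a name on the "anonymous" device.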

Data brokerages

When your data is collected by different parties, very often they will repackage and sell the data. There are large data brokerages with massive datasets. This means that intelligent systems (or a determined adversary) could potentially gather a near-complete picture of your life by correlating pieces from different datasets. This is a massive advantage that large firms will always have over smaller organizations.
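Correlating datasets can be as simple as matching on a few shared fields. The sketch below uses two invented datasets and assumes that ZIP code, birth year, and sex appear in both. Neither dataset is revealing on its own, but joined together they attach names to purchases.

```python
# Hypothetical dataset A: "anonymous" purchase records from one broker.
purchases = [
    {"zip": "90210", "birth_year": 1985, "sex": "F", "item": "prenatal vitamins"},
    {"zip": "10001", "birth_year": 1990, "sex": "M", "item": "running shoes"},
]

# Hypothetical dataset B: a marketing list that includes names.
marketing = [
    {"name": "Alice Example", "zip": "90210", "birth_year": 1985, "sex": "F"},
    {"name": "Bob Example", "zip": "10001", "birth_year": 1990, "sex": "M"},
    {"name": "Carol Example", "zip": "10001", "birth_year": 1972, "sex": "F"},
]

# Fields assumed to be present in both datasets.
QUASI_IDENTIFIERS = ("zip", "birth_year", "sex")


def link(anonymous, named, keys=QUASI_IDENTIFIERS):
    """Attach names to anonymous rows whose key fields match one person."""
    index = {}
    for row in named:
        index.setdefault(tuple(row[k] for k in keys), []).append(row["name"])

    linked = []
    for row in anonymous:
        names = index.get(tuple(row[k] for k in keys), [])
        if len(names) == 1:  # only an unambiguous match re-identifies someone
            linked.append({"name": names[0], **row})
    return linked


for match in link(purchases, marketing):
    print(match["name"], "bought", match["item"])
```

The more datasets a brokerage holds, the more fields it can match on, and the fewer people share any given combination.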

Data breaches

It is not only your own personal security that matters, but also the security of all those who are keeping data about you. Have I Been Pwned is a great tool to check whether your e-mail appears in any data breaches. The trouble is that any information that becomes accessible is impossible to truly erase. Those wishing to use data to control others likely do not care about ethical concerns over how it is sourced.

Artificial intelligence

AI is no longer a sci-fi concept. It is very real and very present in our lives. Artificial intelligence is fueled by big data. This creates a feedback loop that incentivizes further data collection. The more data that exists, the more data there is to abuse.

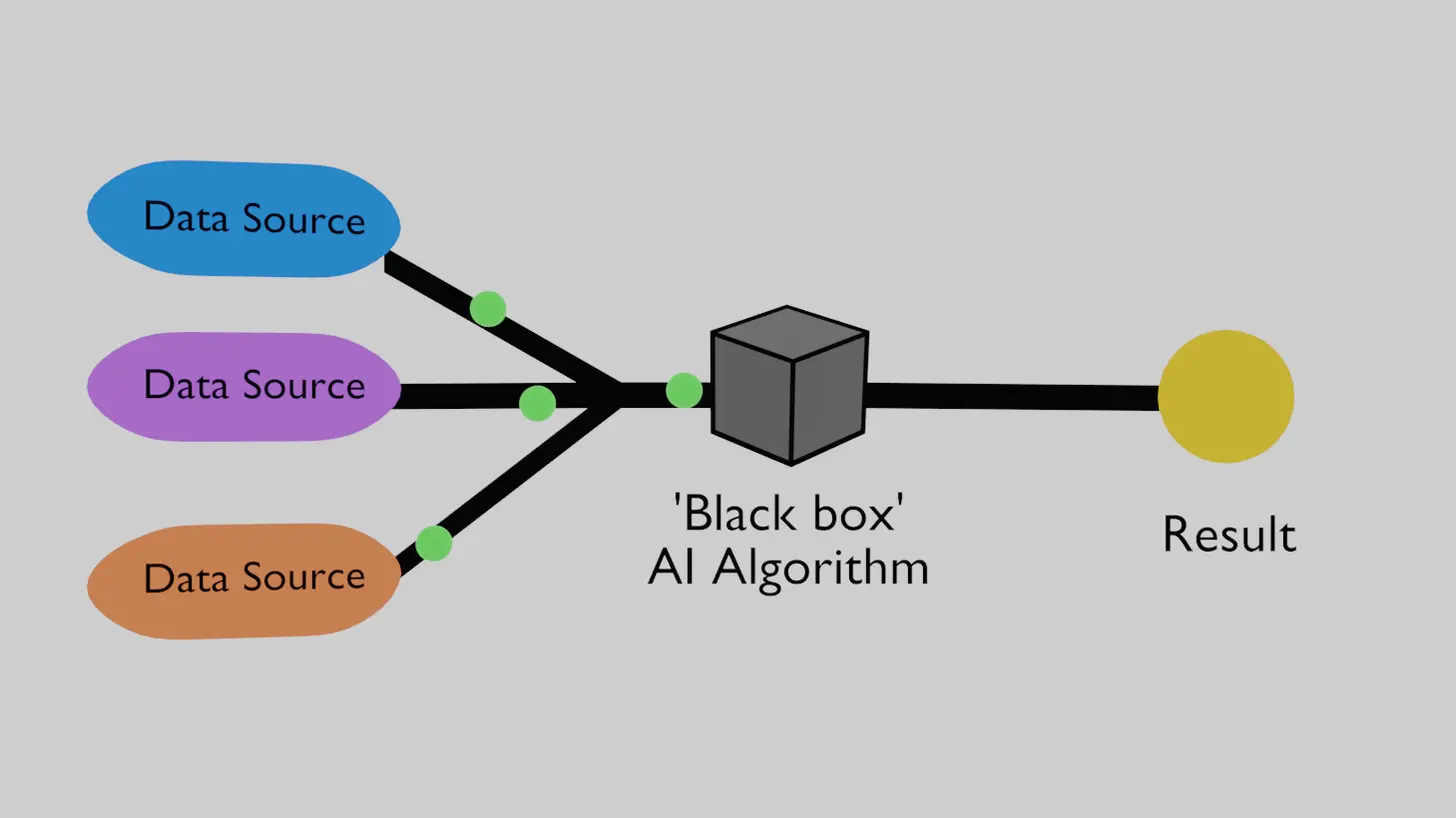

How does it work? Signals & Levers

To oversimplify a bit, an AI algorithm is given many signals as ‘inputs’ and outputs one or many results. AIs are ‘trained’ by feeding them inputs and the desired outputs. It is essentially the AI’s job to learn how to produce those outputs. By adjusting the values in its ‘memory’, it will learn how to give better results. Once an AI is trained with enough data, it will be able to reliably produce accurate results for new data.
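The training loop described above can be sketched with a single artificial neuron, about the simplest ‘AI’ there is. This is a toy under stated assumptions, not how any production system is built: two input signals, a made-up task (output 1 if either signal is on), and a basic perceptron update rule for adjusting the ‘memory’ (the weights and bias).

```python
def predict(weights, bias, signals):
    """Combine the input signals with the learned values into one output."""
    total = bias + sum(w * s for w, s in zip(weights, signals))
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=0.1):
    """Nudge the weights whenever the output differs from the desired one."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for signals, desired in examples:
            error = desired - predict(weights, bias, signals)
            weights = [w + learning_rate * error * s
                       for w, s in zip(weights, signals)]
            bias += learning_rate * error
    return weights, bias


# Training data: (input signals, desired output). The case (1, 1) is
# deliberately left out so it can serve as 'new data' afterwards.
examples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1)]

weights, bias = train(examples)
print(predict(weights, bias, (1, 1)))  # an input it never saw in training
```

Nobody wrote a rule saying "output 1 when both signals are on". The neuron arrived at that answer from the examples alone, which is also why the quality of those examples matters so much.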

A great deal of these results are derived from a properly designed network and dataset. An AI fed with data that is incomplete or biased can produce useless or incorrect results. This is why it is a horrific idea to allow AI algorithms designed to track and control you to have control over your life. These AI algorithms can work together to create more sophisticated tools.

Hard-to-predict correlations

AI algorithms shouldn’t have direct control over systems that have the capability of harming people. By giving complex AIs levers to control the real world it can be almost impossible to predict what the potential impacts can be. The potential risks to human rights or even safety can’t be overstated.

This is because no matter how powerful our machines are, or how sophisticated our algorithms are, we are not able to fully simulate the human experience in our human societies. There will be blind spots and bad incentives. They are designed by humans, after all.

These tools are impressive

This is not to say AI is useless or bad. It has many very useful and powerful applications. You can explore the possibilities with the amazing YouTube channel Two Minute Papers. The best way to use AI is to use it where there is no danger in a wrong answer. My favorite example is upscaling: generating higher resolution images from lower resolution ones.

Not all sunshine and rainbows.

The sad news is that many of these systems are in control of our very lives. There is a race to give AI algorithms complete control of what we see, hear, and remember. It is important to keep in mind that these powerful tools are constantly working in the background in the interests of powerful institutions.

It is very possible that these AI algorithms feed into each other in unintuitive ways. As the technocracy expands its digital control, the impacts may become clearer.

Advertising

The study of humans in cyberspace has been ongoing for quite some time. The game-changer is that artificial intelligence can find subtle ways to manipulate you that even its creators couldn’t imagine. Every time you interact with an ad online, you are training algorithms to learn how to grab people’s time and attention.

Censorship

Giving AIs the power to moderate the Internet has disastrous potential. Human beings, for all their wisdom, have failed to ever use censorship for anything good. Artificial intelligence should never be a barrier to people’s ability to access information or express themselves.

Astroturfing

Bots are also faster at engaging online than human beings. It would be trivial to use currently available technology to swarm the Internet with bots that are able to out-produce any real human input to spread propaganda. Bots already outnumber humans on social media. The usual solution to this is to demand that human beings use their real identities online, but this immediately backfires by giving AIs a list of ‘real targets’ to hit.

AI is a game-changer for the technocracy. By herding people into systems with defined goals, the systems can set incentive structures to make the herd act how the system wants. If you believe money is a powerful influence over individual people, watch what happens when every aspect of their lives is made better or worse based on how they are judged by these systems.

Plausible deniability

An underestimated part of these AI algorithms is that with datasets on everyone they can still target people individually. While this specific case may be an isolated incident, the capability exists to manipulate individuals more subtly. This can impact those who may be more vulnerable, or less likely to speak out.

Perception management

As top-down control drives more and more of what people see and experience, AIs can learn to manipulate people’s behavior in incalculable ways. As we allow fewer systems to take total control over information, those who run those systems can actively brainwash the public for any purpose.

Perception management is a weapon primarily aimed at people’s subconscious minds. There is immense opportunity to leverage people’s very own biology against themselves. Fear, disgust and anger are some of the strongest emotions that can be used to influence people.

Digital Gulags

‘Social credit scores’ don’t need to be binary. Everything in your life can be put on a sliding scale. If you behave ‘well’ the system can reward you with:

- A more pleasant social media feed

- More favorable transaction costs and terms

- Preferential resolutions in disputes

- Access to more computing power

- Access to more energy

- Better quality food

If the system decides you’re not doing what it wants it can punish you with:

- More negative news

- Less representation in disputes

- Less computing power

- Energy rationing

- Lower quality food

Once allowed, this system wouldn’t simply turn people into slaves. It would hijack evolution to transform what once were human beings into different creatures altogether. Sounds a lot like Roko’s Basilisk…

Solutions

Take privacy and security seriously

Consider switching away from tools and technology that track you as part of their business model. It is worth your time to find hardware, operating systems, and programs that aren’t proactively reporting on your behavior. This doesn’t have to be all-at-once but can be done gradually. Every bit helps and there is a gradient of success. Another option is simply to modify your lifestyle to find ways to rely less on digital systems.

Limit your interaction with data-driven services

This doesn’t mean you have to immediately close your bank account or quit your job. Using your influence as a customer or employee can help shift institutions in a better direction. Be aware of how these systems work and how you can mitigate their effects on your life. Any time you replace a service or tool that is tracking you with one that isn’t, you’re making great progress. Consider having your data deleted from unused sites. Try shopping in store, rather than from an online storefront.

Switching away from algorithmically manipulated social media towards better alternatives such as Pleroma & Mastodon can help a great deal. Gab also doesn’t manipulate what appears in your feed. Ensure that you’re bookmarking important sites. RSS feeds are also critical in getting information without censorship or moderation.
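Part of what makes RSS resistant to manipulation is that a feed is just a plain XML file listing posts in the order the publisher wrote them. As a small sketch, the Python standard library alone can read one. The sample feed and the `list_posts` helper below are made up for illustration. A real feed would be downloaded from the site first.

```python
import xml.etree.ElementTree as ET

# Hypothetical RSS 2.0 feed, inlined so the example runs without a network.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example Blog</title>
    <item>
      <title>First post</title>
      <link>https://example.org/first</link>
    </item>
    <item>
      <title>Second post</title>
      <link>https://example.org/second</link>
    </item>
  </channel>
</rss>"""


def list_posts(feed_xml: str) -> list[tuple[str, str]]:
    """Return (title, link) for every item, in the order the feed lists them."""
    root = ET.fromstring(feed_xml)
    return [
        (item.findtext("title", default=""), item.findtext("link", default=""))
        for item in root.iter("item")
    ]


for title, link in list_posts(SAMPLE_FEED):
    print(title, "->", link)
```

No algorithm sits between you and the list. What you see is what the publisher posted.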

Support open data

AI can be used for good! Open source tools and open datasets level the playing field a great deal. An interesting project in this space is Mozilla Common Voice. It is difficult for this kind of work to compete with corporate or state-level initiatives, but it would be much harder to hide private data in a public dataset.

Consider getting involved locally. If there are local groups interested in privacy rights and security, you can work with them to influence local governments and institutions to better protect people’s privacy.
